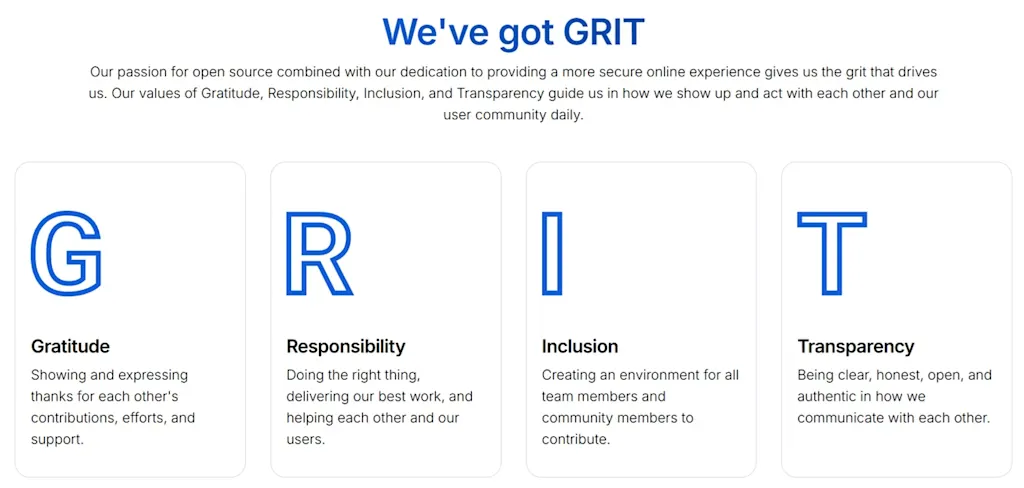

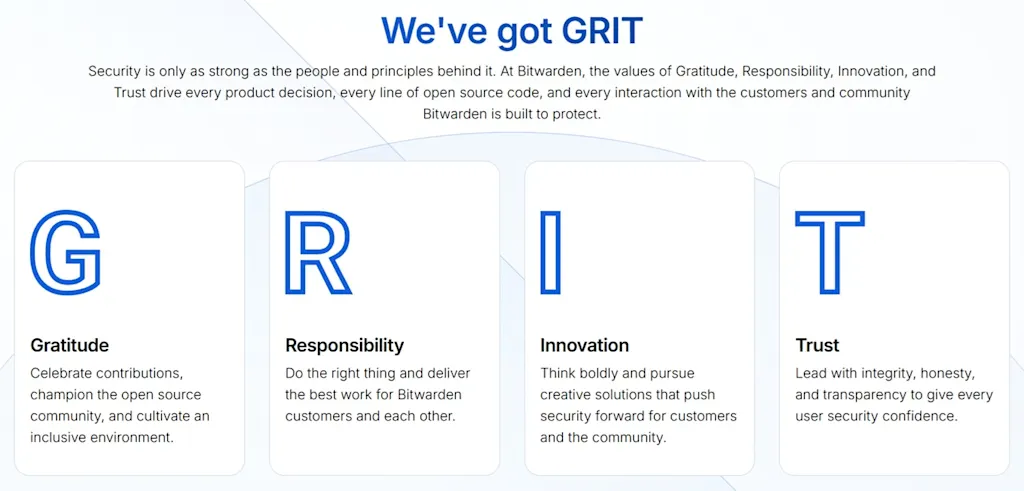

The company has long defined its values with the acronym “GRIT,” which used to stand for “Gratitude, Responsibility, Inclusion, and Transparency.” After May 4, it changed the acronym to stand for “Gratitude, Responsibility, Innovation, and Trust.”

It’s not as bad as the headline seems. Transparency is still in the motto. The actual change is:

But still. Why change it at all? Why replace “inclusion” with “innovation”?

It smells like Tech Bro.

There’s just no way to spin that positively, even giving them the benefit of the doubt, especially since they aren’t rolling it back. Someone spent effort to make that values change, so it’s neither an accident nor a “nothingburger”.

That’s a great point.

I don’t want to trust them either. I don’t want to have to.

The only “devil’s advocate” argument I can think of is that they’re trying to appeal to enterprise clients (who would not know that and want to “trust” a security company). That would explain the “I” change: “inclusion” (sadly) sounds political, while “innovation” is like corporate catnip. Bitwarden could be trying to attract big fish to fund development, having their cake and eating it.